
How you use that memory determines how effectively you can use your data, making big data management the single most important part of working with relational databases. However, size is not as important as how you manage the memory that you use. The amount of memory that your Amazon Redshift Cluster uses is directly connected to the performance of your relational database. Optimizing memory management is important for running a successful Amazon Redshift cluster. Snappy is an order of magnitude faster than LZ4 but requires more CPU power to compress and decompress data, so it’s advisable to only use Snappy when necessary. Compression Tools Are AvailableĬompressing Amazon Redshift data can be done using two formats: LZ4 and Snappy. The larger your data lake is, the more effective compression can be at helping you make it usable in real-time. Advanced Compression Techniques Make Queries Much FasterĬompression can be much more complex than that, especially on the server-side of data warehouses.

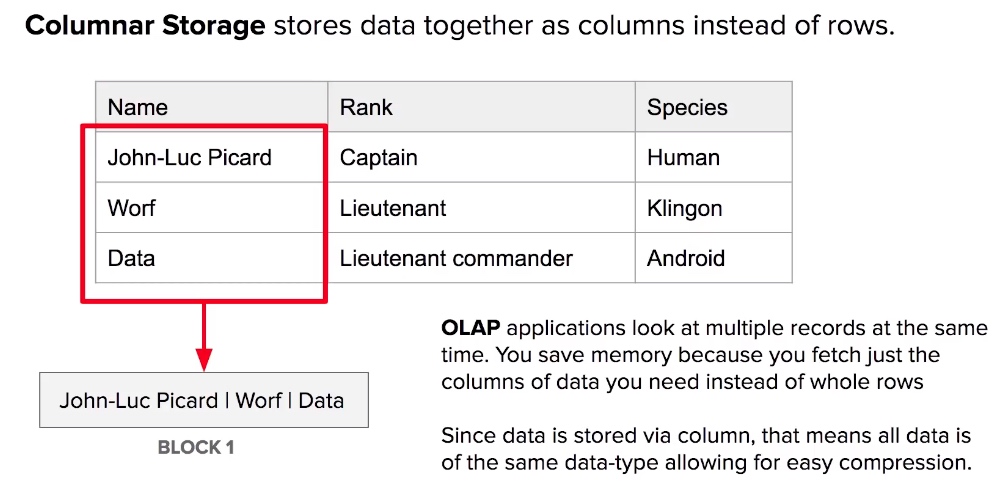

Simple compression methods, such as removing spaces, line breaks, and other unnecessary syntax or fixing sort keys reduce latency when sorting through that data. Compression essentially reduces code to its smallest possible memory usage for data storage and processing.įor example, code written with a lot of erroneous characters decreases throughput. Compression Minimizes That SlowdownĪpplying compression to your Amazon Redshift data and compute nodes can minimize that slowdown is another Amazon Redshift performance tip. Increasing the amount of columnar data in your data lake means that your queries may have to run against more sort keys to find what you are looking for. While Redshift can resize to cover the amount of data that you need relying on massively parallel processing (MPP), query execution for that data can become problematic. Compress Data Where PossibleĪmazon Redshift is a massive data warehouse using Amazon S3, and your database can grow exponentially over time. This can be helpful when building business intelligence that requires real-time updates to essential information.įor example, a company that needs to have updated stock information from other companies’ data sources, like NASDAQ, can aggregate real-time data to support investment decisions using Amazon S3. Put simply, you can use federated queries to aggregate data from other compute nodes. Pull Together Resources From Other Databases Federated queries are designed to let you extract, transform and load live data in outside databases from within your Amazon Redshift Spectrum database. Use Federated QueriesĪnother Amazon Redshift performance tip is to use federated queries. It can be one table that holds all of the results separating it from the rest of the data with distribution keys to make similar PostgreSQL queries faster in the future. 
Materialized views are so effective at speeding up the ETL process because they make data easier to work with.įor example, use cases where a company runs a query to find customer names by their email address are made easier by creating a materialized view to hold the results. You can create templates for the data that you want to retrieve using AWS services so that you can speed up the ETL process. Materialized views show the results of a query in a specific way. Many people don't know this, but Redshift can use pre-built materialized views to improve query performance and ease of use for data management purposes. Streamline the ETL Process with Integrate.io.Get Familiar With Redshift’s Data Types.
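
To make the customer-by-email example from the materialized views tip concrete, here is a minimal sketch in Python. It is an emulation, not Redshift itself: an in-memory SQLite database stands in for the warehouse, and a precomputed table plays the role of the materialized view. The table, column, and function names (`customers`, `mv_customer_by_email`, `refresh_customer_by_email`, `name_for_email`) are hypothetical. In Redshift the equivalent statements would be `CREATE MATERIALIZED VIEW ... AS SELECT ...` and `REFRESH MATERIALIZED VIEW ...`.

```python
import sqlite3

# In-memory SQLite stands in for the warehouse; all names here are hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, email TEXT);
    INSERT INTO customers (name, email) VALUES
        ('Ada Lovelace', 'ada@example.com'),
        ('Grace Hopper', 'grace@example.com');
""")


def refresh_customer_by_email(conn):
    """Rebuild the precomputed lookup table (emulates refreshing a materialized view)."""
    conn.executescript("""
        DROP TABLE IF EXISTS mv_customer_by_email;
        CREATE TABLE mv_customer_by_email AS
            SELECT email, name FROM customers;
        CREATE UNIQUE INDEX idx_mv_email ON mv_customer_by_email (email);
    """)


def name_for_email(conn, email):
    """Answer the lookup from the precomputed table instead of the base table."""
    row = conn.execute(
        "SELECT name FROM mv_customer_by_email WHERE email = ?", (email,)
    ).fetchone()
    return row[0] if row else None


refresh_customer_by_email(conn)
print(name_for_email(conn, "ada@example.com"))
```

As with a real materialized view, the precomputed results go stale when the base table changes: a customer inserted after the last refresh is not visible until `refresh_customer_by_email` runs again.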

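
The link between sort keys and compression mentioned in the compression tips can be illustrated with a toy run-length encoder. This is only a sketch of the general idea, not Redshift's implementation, and the sample column values and function names (`rle_encode`, `rle_decode`) are made up for illustration. It shows that the same column compresses into fewer runs once it is sorted.

```python
from itertools import groupby


def rle_encode(values):
    """Collapse consecutive repeats into (value, run_length) pairs."""
    return [(value, sum(1 for _ in group)) for value, group in groupby(values)]


def rle_decode(runs):
    """Expand (value, run_length) pairs back into the original sequence."""
    return [value for value, count in runs for _ in range(count)]


# Hypothetical column of country codes in arrival order.
column = ["US", "DE", "US", "FR", "DE", "US", "US", "FR"]

unsorted_runs = rle_encode(column)
sorted_runs = rle_encode(sorted(column))

# Fewer runs means less data to store and scan.
print(len(unsorted_runs), len(sorted_runs))
```

The encoding is lossless in both cases, so `rle_decode` returns the original values; sorting only changes how many runs are needed to represent them.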